Have you ever considered supplementing your development skills with some data science? Expand your range and follow this quick tutorial on how to build your own predictive models using open datasets, Jupyter notebooks and Sequential models.

Pick Your Data Game

Your first step in learning data science is to find a dataset that fits your interests. A robust platform where you can find inspiration is Kaggle, a web-based data science environment for machine learning enthusiasts. It offers not only open datasets but also competitions and even free micro-courses.

Maybe you want to change the world and help with scientific research. Maybe you’d like to jump in to win $65,000 in a sponsored challenge. Or maybe you’d like to start with some machine learning techniques. There is plenty of data to search through, and there are always a couple of open contests to enter.

For this tutorial we are going to use a dataset from a competition called “Histopathologic Cancer Detection.” It is very important to understand not only the data we are going to work with, but also the main purpose of this challenge. Looking at our example, we read the following: Identify metastatic tissue in histopathologic scans of lymph node sections.

In layman’s terms, the goal is to determine whether a given lymph node scan shows signs of tumor tissue. This is enough information to know that our model will have to answer this question in a binary manner: if there is metastatic tissue in a given image, the answer is yes; if there is not, the answer is no.

Choose a Game Plan

There are two main types of machine learning: supervised and unsupervised.

In our case, we have a training set and a ground truth: the train labels. In other words, we have prior knowledge of what the output values for our training images should be. Because we have this ground truth, we know we are dealing with supervised machine learning.

The goal here is to learn a function based on example input-output pairs. Having this function, the task is to map a new given input to a predicted output. Sound reasonable? Let’s jump into the competition sources and see if we have what we need.
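To make the idea of learning a function from input-output pairs concrete, here is a minimal sketch that has nothing to do with images yet. It learns a single threshold on one-dimensional inputs; the helper name `learn_threshold` and the toy data are made up for illustration.

```python
from typing import Callable, List, Tuple


def learn_threshold(pairs: List[Tuple[float, int]]) -> Callable[[float], int]:
    """Learn a rule of the form `x >= t -> 1` from labeled (input, output) pairs."""
    xs = sorted({x for x, _ in pairs})
    # Candidate thresholds: one below all inputs, every observed input,
    # and one above all inputs (so "always 1" and "always 0" are both possible).
    candidates = [xs[0] - 1.0] + xs + [xs[-1] + 1.0]

    def errors(t: float) -> int:
        # Number of training pairs the rule `x >= t` gets wrong.
        return sum(int(x >= t) != y for x, y in pairs)

    best = min(candidates, key=errors)
    return lambda x: int(x >= best)


# Toy training set: inputs with known outputs (the ground truth).
training_pairs = [(0.1, 0), (0.3, 0), (0.4, 0), (0.6, 1), (0.8, 1), (0.9, 1)]
predict = learn_threshold(training_pairs)

# Map new, unseen inputs to predicted outputs.
print(predict(0.2), predict(0.7))
```

A neural network does the same thing in spirit: it searches for the parameters that make the fewest mistakes on the labeled examples, then applies them to new inputs.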

Train set: This set contains the data you will train your model on. It’s the set of plain images for which we already know the correct answer.

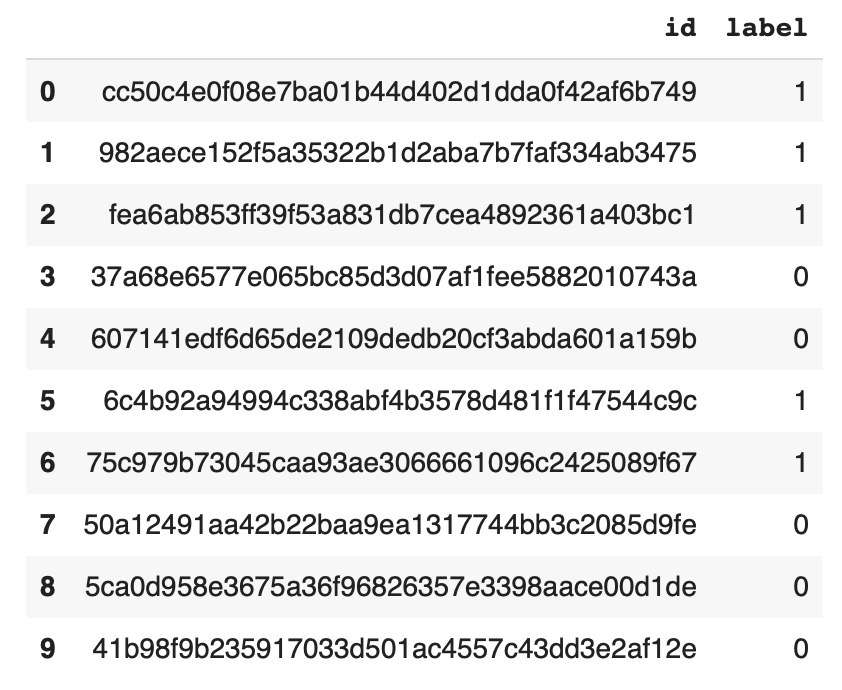

Train labels: As you may have already guessed, this file contains the correct answer for each image in the train set.

Test set: The set of images for which we do not know the answer.

Our goal is to create a new file (similar to train labels) containing predicted answers for images from the test set.

Game On!

For this opportunity, I decided to explore Google Colaboratory. It is a free Jupyter notebook environment with Python 3 that requires no setup and runs entirely in the cloud. If you haven’t used Jupyter before, now is a great chance to get started. With Jupyter notebooks, you can write and execute code, save and share it, and even access powerful computing resources, all from your browser.

We start our game by importing the files we need into Google Colaboratory. Remember to copy the imports, as we will need them later.

We will use the official Kaggle API (its documentation covers the setup and how to obtain the needed keys) to easily retrieve large datasets directly to a server environment.
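As a rough sketch of the credentials step: the Kaggle client reads a `kaggle.json` file holding a username and key from a `.kaggle` folder in the home directory. The helper `write_kaggle_credentials` and the placeholder values below are hypothetical, and the demo writes to a temporary folder so it does not touch any real credentials.

```python
import json
import stat
import tempfile
from pathlib import Path


def write_kaggle_credentials(username: str, key: str, config_dir: Path) -> Path:
    """Write a kaggle.json credentials file readable only by the current user."""
    config_dir.mkdir(parents=True, exist_ok=True)
    path = config_dir / "kaggle.json"
    path.write_text(json.dumps({"username": username, "key": key}))
    # The Kaggle client warns when other users can read the file.
    path.chmod(stat.S_IRUSR | stat.S_IWUSR)
    return path


# Demo only: in a notebook you would pass Path.home() / ".kaggle" instead,
# with the username and key taken from your own API token.
demo_dir = Path(tempfile.mkdtemp()) / ".kaggle"
credentials_path = write_kaggle_credentials("your-username", "your-api-key", demo_dir)
print("Credentials written to", credentials_path)

# With real credentials in place, a notebook cell along these lines should
# fetch the competition files:
#   !kaggle competitions download -c histopathologic-cancer-detection -p /content
```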

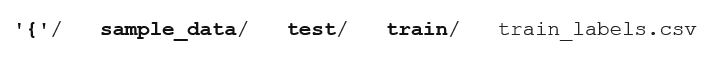

Now when you execute ls /content/, you should see:

We are going to read the dataset into a pandas DataFrame.
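Reading the labels could look roughly like the sketch below. It assumes the labels file is a CSV with an `id` column and a `label` column; the three rows are made-up stand-ins so the snippet runs without the real download.

```python
import io

import pandas as pd

# Hypothetical sample mimicking the assumed layout of the labels file.
sample_csv = io.StringIO(
    "id,label\n"
    "scan_a,0\n"
    "scan_b,1\n"
    "scan_c,0\n"
)

# In the notebook, pass the path of the downloaded labels file instead.
labels = pd.read_csv(sample_csv, dtype={"id": str, "label": int})

# Inspect the first rows and how many examples each class has.
print(labels.head())
print(labels["label"].value_counts())
```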

The output should be as shown below:

With all the data in place, we are ready to construct our first model.


The Sequential model is a linear stack of layers. The model needs to know what input shape it should expect. For this reason, the first layer (and only the first) needs to receive information about its input shape. You can read more about the construction of the Keras Sequential model in the Keras documentation.
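A small Sequential model for a yes/no image question might be sketched as follows. The layer sizes are arbitrary choices for illustration, not a tuned architecture, and the 96×96 RGB input shape is an assumption about the scans.

```python
from tensorflow import keras
from tensorflow.keras import layers

# Assumed image size: 96x96 pixels with 3 color channels.
IMAGE_SHAPE = (96, 96, 3)

model = keras.Sequential([
    # Only the first layer is told the input shape; later layers infer theirs.
    layers.Conv2D(16, kernel_size=3, activation="relu", input_shape=IMAGE_SHAPE),
    layers.MaxPooling2D(pool_size=2),
    layers.Conv2D(32, kernel_size=3, activation="relu"),
    layers.MaxPooling2D(pool_size=2),
    layers.Flatten(),
    layers.Dense(64, activation="relu"),
    # A single sigmoid unit outputs the probability of the "yes" class.
    layers.Dense(1, activation="sigmoid"),
])

model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
model.summary()
```

The sigmoid output paired with binary cross-entropy is a common choice for binary classification; other setups, such as two softmax outputs, would work as well.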

We need to declare some helper functions to transform the data to fit our needs (map train set with train labels).
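One such helper could attach a file path to every row of the labels table. This is only a sketch: the function name `add_image_paths`, the `.tif` extension, and the `/content/train` folder are assumptions, and actually loading the images is left out.

```python
from pathlib import PurePosixPath

import pandas as pd


def add_image_paths(
    labels: pd.DataFrame, image_dir: str, extension: str = ".tif"
) -> pd.DataFrame:
    """Return a copy of `labels` with a `path` column pointing at each image file."""
    result = labels.copy()
    result["path"] = [
        str(PurePosixPath(image_dir) / f"{image_id}{extension}")
        for image_id in result["id"]
    ]
    return result


# Made-up labels standing in for the real train labels.
labels = pd.DataFrame({"id": ["scan_a", "scan_b"], "label": [0, 1]})
train_frame = add_image_paths(labels, "/content/train")
print(train_frame)
```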

Execute the code below and put on your favorite TV show … this will take some time to run! Remember to change the hardware accelerator to GPU. It’s free and can speed up model building by up to five times. Go to Runtime, select Change runtime type, and change None to GPU.
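The sketch below shows one way to confirm that TensorFlow sees the GPU and what a training call looks like. It uses random arrays as stand-in data and a deliberately tiny model so it finishes quickly; the real run would use the mapped train images and labels instead.

```python
import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# An empty list means no GPU is attached and training will run on the CPU.
gpus = tf.config.list_physical_devices("GPU")
print("GPUs visible to TensorFlow:", gpus)

# Synthetic stand-in data: 32 random "images" with random 0/1 targets.
rng = np.random.default_rng(0)
images = rng.random((32, 96, 96, 3), dtype=np.float32)
targets = rng.integers(0, 2, size=(32, 1)).astype("float32")

model = keras.Sequential([
    layers.Conv2D(8, 3, activation="relu", input_shape=(96, 96, 3)),
    layers.GlobalAveragePooling2D(),
    layers.Dense(1, activation="sigmoid"),
])
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

# Hold out a quarter of the data to monitor validation loss during training.
history = model.fit(
    images, targets, epochs=2, batch_size=8, validation_split=0.25, verbose=0
)
print(history.history["loss"])
```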

Data Science Endgame

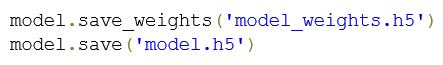

Before we generate predictions, we should probably preserve our model (better safe than sorry).
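Saving and restoring a Keras model can be sketched like this. The one-layer model and the file name `cancer_model.keras` are placeholders; in the notebook you would save the trained model instead.

```python
import os
import tempfile

import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Placeholder model standing in for the trained one.
model = keras.Sequential([layers.Dense(1, activation="sigmoid", input_shape=(4,))])
model.compile(optimizer="adam", loss="binary_crossentropy")

# Save the architecture and weights, then load them back.
model_path = os.path.join(tempfile.mkdtemp(), "cancer_model.keras")
model.save(model_path)
restored = keras.models.load_model(model_path)

# The restored model should give the same predictions as the original.
sample = np.ones((2, 4), dtype="float32")
same = np.allclose(
    model.predict(sample, verbose=0), restored.predict(sample, verbose=0)
)
print("Predictions match after reload:", same)
```

Keep in mind that files in a Colab session disappear when the runtime is recycled, so it is worth downloading the saved model or copying it to persistent storage.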

The last step is to get the answers for the images in our test set. Once everything is computed, we can look at the predictions for the first couple of images.
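A model with a sigmoid output returns probabilities rather than yes/no answers. If hard labels are needed, a thresholding step like the sketch below is one option; the helper `to_labels`, the 0.5 cut-off, and the sample probabilities are illustrative assumptions.

```python
import numpy as np


def to_labels(probabilities, threshold: float = 0.5) -> np.ndarray:
    """Turn predicted probabilities into 0/1 labels using a cut-off."""
    flat = np.asarray(probabilities, dtype=float).reshape(-1)
    return (flat >= threshold).astype(int)


# Made-up values shaped like the output of model.predict: one column per image.
probabilities = np.array([[0.03], [0.91], [0.48], [0.50]])
predicted_labels = to_labels(probabilities)
print(predicted_labels.tolist())  # [0, 1, 0, 1]
```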

To save the final results and download them, run this:
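One possible version of this step is sketched below. It assumes the results file uses the same `id` and `label` columns as the train labels, and the two rows are placeholders. The download helper only exists inside Colab, so the snippet falls back to printing the path elsewhere.

```python
import os
import tempfile

import pandas as pd

# Placeholder predictions; in the notebook these come from the test set.
submission = pd.DataFrame({"id": ["scan_x", "scan_y"], "label": [0, 1]})

# Write the results without the DataFrame index column.
output_path = os.path.join(tempfile.mkdtemp(), "submission.csv")
submission.to_csv(output_path, index=False)

try:
    # Available only inside Google Colaboratory.
    from google.colab import files

    files.download(output_path)
except ImportError:
    print("Not running in Colab; results saved to", output_path)
```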

It’s time to upload the files to Kaggle and wait to see our final percentage score.

Stepping Up Your Data Science Game

There’s nothing better than a little healthy competition. Since we are taking part in a real contest, we have to consider that there will be some winners and some losers. There’s no doubt that we want to be in the first group, but the model that we have trained so far does not guarantee this. We should improve the predictive power as much as possible (although watch out for overfitting).

There are many techniques to do that, including adding more data, algorithm tuning or ensemble methods. It’s easy to list them, but not so easy to pick the ones that will actually improve our score.
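As one small illustration of an ensemble method, the sketch below averages the predicted probabilities of several models. The function `average_ensemble` and the three prediction lists are invented for the example; whether averaging helps in practice depends on how different the underlying models are.

```python
import numpy as np


def average_ensemble(predictions) -> np.ndarray:
    """Average per-image probabilities across several models."""
    stacked = np.stack([np.asarray(p, dtype=float) for p in predictions])
    # Axis 0 runs over models, so the mean is taken image by image.
    return stacked.mean(axis=0)


# Made-up probabilities from three models for the same three images.
model_a = [0.9, 0.2, 0.6]
model_b = [0.7, 0.4, 0.2]
model_c = [0.8, 0.3, 0.4]

combined = average_ensemble([model_a, model_b, model_c])
print(np.round(combined, 2).tolist())
```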

There are endless possibilities to increase the predictive power of this or any model. Pick a new dataset and try for yourself to expand your data science skills.