With video making up more and more of the media we interact with and create daily, there’s also a growing need to track and index that content. What meeting or seminar was it where I asked that question? Which lecture had the part about tax policies? Twelve Labs has a machine learning solution for summarizing and searching video that could make that work quicker and easier for both consumers and creators.

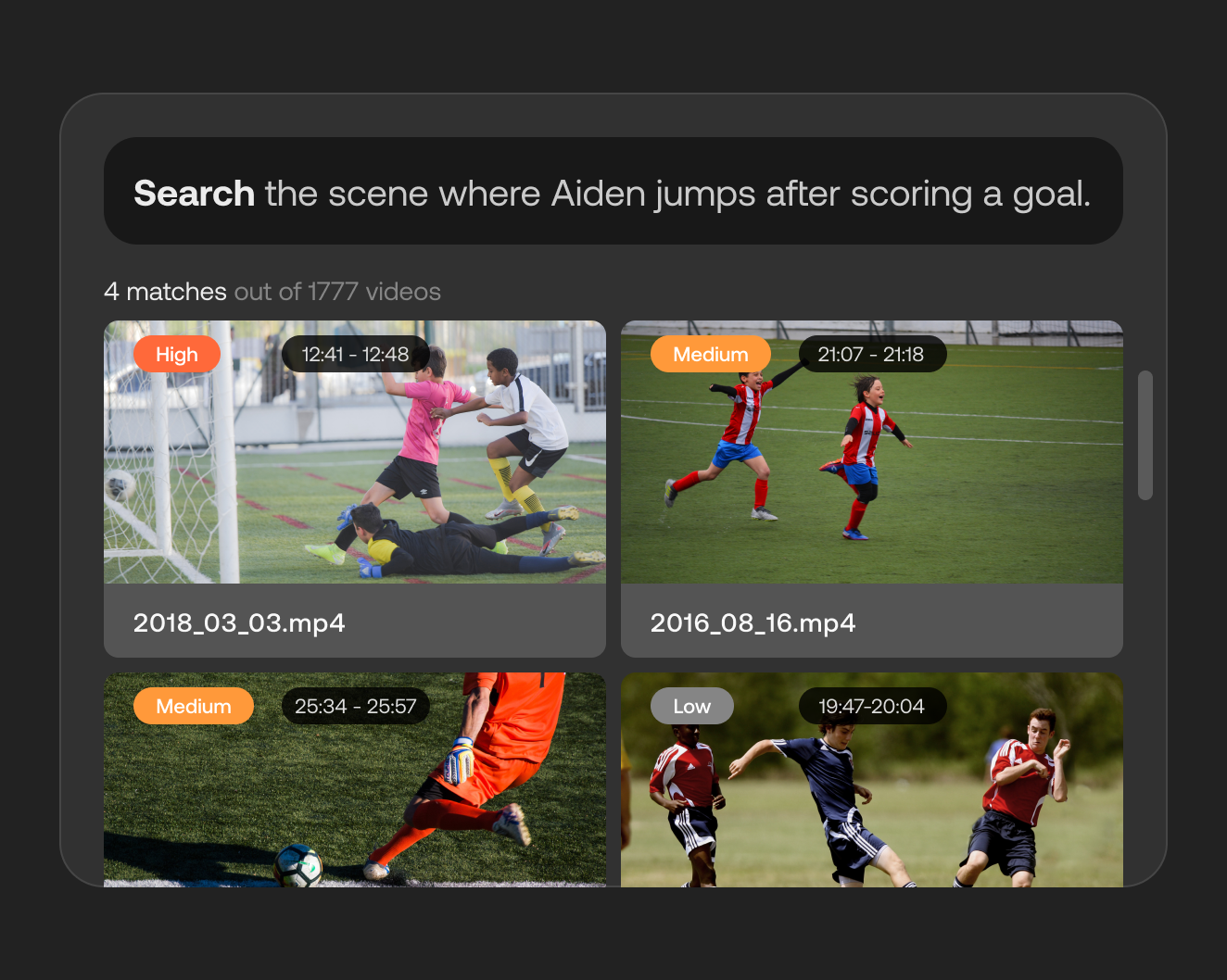

The startup lets you enter a complex yet vague query like “the office party where Courtney sang the national anthem” and instantly get not just the video but the moment in the video where it happens. “Ctrl-F for video” is how they put it. (That’s command-F for our friends on Macs.)

You might think “but wait, I can search for videos right now!” And yes, on YouTube or in a university archive you can often find the video you want. But what happens then? You scrub through the video looking for the part you wanted, or scroll through the transcript trying to think of the exact way they phrased something.

This is because when you search video, you’re really searching for tags, descriptions and other basic elements that can be easily added at scale. There’s some algorithmic magic to surfacing the video you want, but the system doesn’t really understand the video itself.
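To make that limitation concrete, here is a minimal sketch of metadata-only search. It is purely illustrative: the `Video` class, the `tag_search` function and the sample library are hypothetical and do not represent any real platform's search code. The point is that a query can only match words someone put in the title, tags or description, and a match returns a whole video rather than a moment inside it.

```python
from dataclasses import dataclass, field


@dataclass
class Video:
    # Hypothetical record: only human-supplied metadata, no knowledge of content.
    title: str
    tags: set[str] = field(default_factory=set)
    description: str = ""


def tag_search(videos, query):
    """Return videos whose metadata shares at least one word with the query."""
    terms = set(query.lower().split())
    hits = []
    for video in videos:
        metadata = (
            set(video.title.lower().split())
            | {tag.lower() for tag in video.tags}
            | set(video.description.lower().split())
        )
        if terms & metadata:
            hits.append(video)  # a whole video, with no timestamp attached
    return hits


library = [
    Video("Office party 2021", {"party", "office"}),
    Video("Q3 all-hands", {"meeting"}, "Quarterly update"),
]

# Matches because "office" and "party" appear in the metadata.
by_tags = tag_search(library, "office party")

# Finds nothing: the anthem happened in the video, but nobody tagged it.
by_event = tag_search(library, "Courtney sang national anthem")
```

In this toy setup the second query comes back empty even though the event is in the first video, which is the gap the article describes.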

“The industry has over-simplified the problem, thinking tags can solve search,” said Twelve Labs founder and CEO Jae Lee. And many solutions now do rely on, for example, recognizing that some frames of the video contain cats, so they add the tag #cats. “But video isn’t just a series of images: it’s complex data. We knew we needed to build a new neural network that can take in both visuals and audio and formulate context around that; it’s called multimodal understanding.”

That’s a hot phrase in AI right now, because we seem to be reaching limits in how well an AI system can understand the world when it’s narrowly focused on one “sense,” like audio or a still image. For example, Facebook recently found that it needed an AI that paid attention to both the imagery and text in a post simultaneously to detect misinformation and hate speech.

With video, your understanding will be limited if you’re looking at individual frames and trying to draw associations with a timestamped transcript. When people watch a video, they naturally fuse the video and audio information into personas, actions, intentions, cause and effect, interactions and other more sophisticated concepts.

Twelve Labs claims to have built something along these lines with its video understanding system. Lee explained that the AI was trained to approach video from a multimodal perspective, associating audio and video from the start and creating what they say is a much richer understanding of it.

“We include more complex information, like relationships between items in the frame, connecting the past and present, and this makes it possible to do complex queries,” he said. “Just for example, if there’s a YouTuber, and they search ‘Mr Beast challenges Joey Chestnut to eat a burger,’ it will understand the concept of challenging someone, and of talking about a challenge.”

Sure, Mr Beast — a professional — may have put that particular datum in the title or tags, but what if it’s just part of a regular vlog or a series of challenges? What if Mr Beast was tired that day and didn’t fill in all the metadata correctly? What if there are a dozen burger challenges, or a thousand, and the video search can’t tell the difference between Joey Chestnut and Josie Acorn? As long as you’re leaning on a superficial understanding of the content, there are plenty of ways that it can fail you. If you’re a corporation looking to make 10,000 videos searchable, you want something better — and way less labor intensive — than what’s out there.

Twelve Labs built its tool into a simple API that can be called to index a video (or a thousand), generate a rich summary and connect it to a chosen graph. So if you record all-hands meetings, skill-share seminars or weekly brainstorming sessions, those become searchable not just by time or attendees, but by who talks, when and about what, as well as by other actions like drawing a diagram or showing slides.

“We’ve seen companies with lots of organizational data interested in finding out when the CEO is talking about or presenting a certain concept,” Lee said. “We’ve been working very deliberately with folks to gather data points and interesting use cases — we’re seeing lots of them.”

A side effect of processing a video for search and, as a consequence, understanding what happens in it, is the ability to generate summaries and captions. This is another area where things could be improved. Auto-generated captions vary widely in quality, of course, as does the ability to search them, attach them to people and situations in the video, and do other more complex things with them. And summarization is a field that’s taking off everywhere, not just because no one has enough time to watch everything, but because a high-level summary is valuable for everything from accessibility to archival purposes.

Importantly, the API can be fine-tuned to better work with the corpus it’s being unleashed on. For instance, if there’s a lot of jargon or a few unfamiliar situations, it can be trained up to work just as well with those as it would with more commonplace situations like boardrooms and standard business talk (whatever that is). And that’s before you start getting into things like college lectures, security footage, cooking…

On that note, the company is very much a proponent of the “big network” style of machine learning. Making an AI model that can understand such complex data and produce such a variety of results means it’s a large and computationally intense one to train and deploy. But that’s what’s needed for this problem, Lee said.

“We’re a big believer in large neural networks, but we don’t just increase parameter size,” he said. “It still has multi-billion parameters, but we’ve done a lot of technical kung fu to make it efficient. We do things like not look at every frame — a light algorithm identifies important frames, things like that. There’s still a lot of science yet to happen in language understanding and the multimodal space. But the purpose of a large network is to learn the statistical representation of the data that’s been fed into it, and that concept we’re a huge believer in.”
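Lee did not say how the “light algorithm” picks important frames, so the following is only a sketch of one common, cheap approach: frame differencing, where a frame is kept if it differs enough from the last one kept. The function names, the flat-list frame representation and the threshold value are all assumptions made for illustration, not Twelve Labs’ method.

```python
def mean_abs_diff(frame_a, frame_b):
    """Average absolute difference between two equally sized frames."""
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def select_key_frames(frames, threshold=0.1):
    """Return indices of frames that differ noticeably from the last kept frame.

    Each frame is a flat, non-empty sequence of pixel intensities in [0, 1].
    This is a hypothetical stand-in for whatever selection the real system uses.
    """
    if not frames:
        return []
    kept = [0]  # always keep the first frame as a reference point
    last_kept = frames[0]
    for index in range(1, len(frames)):
        if mean_abs_diff(frames[index], last_kept) > threshold:
            kept.append(index)
            last_kept = frames[index]
    return kept


# Toy clip: three dark frames followed by three bright ones.
dark = [0.0] * 4
bright = [1.0] * 4
clip = [dark, dark, dark, bright, bright, bright]

key_frames = select_key_frames(clip)  # only the scene change survives
```

Only the kept frames would then be passed to the expensive model, which is one way a large network can avoid looking at every frame.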

Though Twelve Labs hopes to help index much of the video out there, you as a user probably won’t be aware of it; aside from a developer playground, there’s no Twelve Labs web platform that lets you search stuff. The API is meant to be integrated into existing tech stacks so that wherever you normally would search through videos, you still will — but the results will be way better. (They’ve shown this in benchmarks where the API smokes other models.)

Although it’s fairly certain that companies like Google, Netflix and Amazon are working on exactly this sort of video understanding model, Lee didn’t seem bothered. “If history is any indicator, at large companies like YouTube and TikTok the search is very specific to their platform and very core to their business,” he said. “We’re not worried about them ripping out their core tech and serving it to potential customers. Most of our beta partners have tried these big companies’ so-called solutions and then came to us.”

The company has raised a $5 million seed round to take it from beta to market; Index Ventures led the round, with Radical Ventures, Expa and Techstars Seattle participating, plus angels including Stanford’s AI leader Fei-Fei Li, Scale AI CEO Alex Wang, Patreon CEO Jack Conte and Oren Etzioni of AI2.

The plan from here is to build out the features that have proven most useful to beta partners, then debut as an open service in the near future.
