Testing autonomous vehicles on public roads is an expensive and time-consuming endeavor, and one that Raquel Urtasun, former chief scientist at Uber ATG, doesn’t think is the most expedient route to market. Waabi, Urtasun’s self-driving truck technology startup that launched last June, has come out with a key component of its strategy to scale its tech – Waabi World, a high-fidelity closed-loop simulator that doesn’t just virtually test Waabi’s self-driving software, but also teaches it in real time.

“Our simulator is immersive as well as reactive,” Urtasun told TechCrunch. “That means it can really mimic the world in all its diversity, beauty and fidelity, as well as automatically create scenarios and stress test the Waabi Driver, and also teach the Waabi Driver how to learn just by experiencing the simulator.”

Using simulation to accelerate the path to market is not new to the AV industry. Waymo, Cruise, Aurora, TuSimple, Tesla and others have all touted the benefits of using simulations built from real-world data to test their AV systems, particularly against made-up scenarios that the systems haven’t yet encountered and cataloged in the real world.

Urtasun said that’s great and all, but the current simulators being used in the industry “don’t provide what is really required to significantly reduce the number of miles that you need to drive in the real world in order to test and develop and deploy this technology.”

Waabi’s secret sauce? A simulator that can automatically build digital twins of the world from data, perform near-real-time sensor simulation, manufacture scenarios to stress test the Waabi Driver, and teach the driver to learn from its mistakes without human intervention, according to Waabi.

Most AV tech developers do some version of this, but Urtasun reckons Waabi has advanced the tech significantly and in a way that’s truly AI-first and rooted in automation. Let’s dive into more detail.

Creating a digital twin

Waabi’s competitors are tackling simulation by getting artists to create three-dimensional CAD models of the world and assign material properties to every object, like trees and buildings, Urtasun said. Those objects are either manually composed into a scene, or the artists use automation techniques such as procedural generation, which combines human-generated assets and algorithms to build a bigger artificial world out of the little pieces.

Cruise, Waymo and Aurora have all confirmed that they follow a similar process to the one Urtasun describes.

“Our approach is very different,” said Urtasun. “We utilize AI to recreate digital twins from everywhere that we have driven. Every time that you have a vehicle driving, collecting data, we can recreate that and we can recreate that with super high fidelity and we only need to observe it once. So this technique scales so much better.”

In other words, Waabi World includes an AI system that takes the raw sensor data and automatically creates a digital twin with it – no artists or procedural generation needed.

Realistic sensor simulation

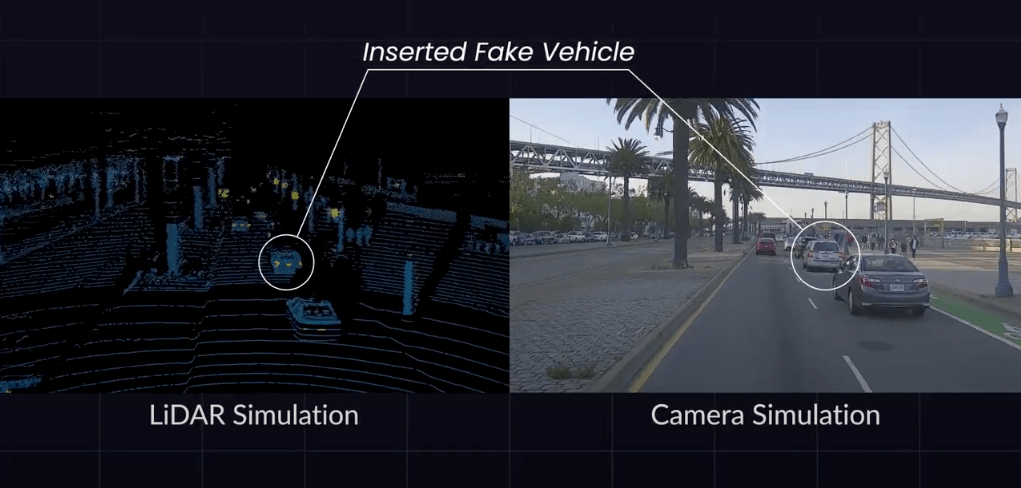

After creating the virtual environment for testing, Waabi has to simulate how the sensors on the trucks will observe a scene in the real world. Waabi uses a combination of AI and simple physics to enable faster and more realistic sensor simulations that allow for a more immersive simulator for the Waabi Driver, Urtasun said.

“The industry standard is to build a super sophisticated physics simulator, where in order for it to work, you need to know all the details about everything,” said Urtasun. “Where is the sun, where are the material properties of everything. They need a lot of computation to be able to create those simulations.”

Urtasun said Waabi World doesn’t need all that labeling of the surrounding environment. Instead, Waabi uses simple physics to provide a rough idea of what the software stack sees through the sensors, then relies on AI, in the form of a new generation of algorithms, to complete the picture.

“We don’t forget about physics, but we simply use it as a stepping stone towards the final simulation, and then you can scale and be much more realistic much faster,” said Urtasun.

Using AI to stress test the Waabi Driver

Most AV companies understand that part of the beauty of simulation is that you can create scenarios for the software stack that haven’t been recorded during real-life driving, which helps test the system against edge cases.

The industry standard so far still revolves around simulation teams finding a small set of scenarios that they observed in the real world or thought up themselves, then creating variations of them by changing the speed, acceleration, geography and starting conditions. This process is often done manually.

Waabi World uses AI to automatically generate those scenarios in a closed-loop simulation (meaning it’s reactive), and it chooses scenarios to test the Waabi Driver against by observing how the driver behaves in the world and understanding where the failures of the system are.

“Waabi World can generate scenarios that have a high probability of making the Waabi Driver fail,” said Urtasun. “So when the autonomy system is very good, you’re going to need millions and billions of scenarios in order for you to see a problem or mistake that this self-driving system might make. It becomes like finding a needle in the haystack.”

Learning in real time, like a human

Often in the AV industry, when a system is being tested, either in simulation or on the road, it’s not learning at the same time. The brain, or software stack, doesn’t evolve until it’s been updated with a new version of software, which has been tweaked by engineers after recording what sorts of mistakes the driver made and figuring out how to avoid them.

Waabi World has the ability to teach the Waabi Driver to drive automatically just by experiencing the simulations, Urtasun said.

“As the Waabi Driver is experiencing the world, Waabi World can tell the driver what mistakes it’s making, and then that driver can take the information and instantaneously update its brain to actually better handle the situations. That way you can continuously and automatically improve your software stack.”

This is similar to how humans learn, Urtasun said. We don’t wait for data to be collected and sent back to the servers, and then for engineers to decide which pieces they want to use for learning and update our brains. Rather, we experience something and instantaneously our brain rewires to be able to handle the situation better.

When it comes to testing on the roads, however, Urtasun said Waabi will not allow its driver to learn while driving, even though it has the capability to do so, for safety reasons.

“Without having a full verification of those new changes, you might potentially be introducing something dangerous,” she said. “You need to know before you put software on the road if it passes all the safety tests first.”

Will we get to see the Waabi Driver in action?

Presumably. Urtasun would not admit to having tested self-driving trucks on public roads at all, although Waabi had to get its initial data sets from somewhere. Instead, she said there would be more news coming soon.

Other competitors in the trucking space are already forging a path to commercialization. TuSimple recently completed its first driver-out pilot on public roads. Waymo Via, Waymo’s trucking and freight unit, has signed J.B. Hunt as its first self-driving freight customer for when it reaches the market, which it expects to happen within the next few years.

Waabi may be a little late to the game, but if Waabi World is everything Urtasun says it is, the company is coming in hot.

“I’m not worried about being late,” said Urtasun. “On the contrary, I think we have perfect timing. Our simulator is the next innovation the industry really needs, so we can go really fast, and then you will see that progress on the autonomy front, as well.”

