Rephrase.ai, a self-described synthetic media production platform, today announced that it raised $10.6 million in a Series A round led by Red Ventures with participation from Silver Lake and 8VC. CEO Ashray Malhotra says that the plan is to put the new cash toward expanding headcount with a particular focus on Rephrase’s engineering, data science, product and business teams.

Rephrase was founded in 2019 by Malhotra, Shivam Mangla and Nisheeth Lahoti. Since their early college days, Lahoti had wanted to build a “text-to-movie” engine that could take a script or storyboard as input and generate a film, Malhotra tells TechCrunch. That proved too ambitious, so the Rephrase team instead developed an AI system that creates avatars of human actors by mapping their faces, synchronizing their lip movements, and mimicking the tone and tenor of their voices.

“With video becoming the default, what today bottlenecks video creation is the time and cost spent on production,” Malhotra said via email. “This is the problem Rephrase aims to solve.”

Using Rephrase’s platform, a customer can select an avatar, background and voice, and enter text that the avatar will recite. They can then export that video for use in sales tools.

The tech isn’t particularly novel. Startups like Synthesia, Neosapience and Hour One rely on similar AI systems to create custom videos for a range of use cases. Newer rivals include China-based Surreal, which aims to develop an AI video editing system that can animate not just faces but also clothing and motions. Elsewhere, video- and voice-focused firms, including Respeecher, Papercup, Resemble AI and Deepdub, have launched AI dubbing tools for shows and movies. Beyond startups, Nvidia has been developing technology that alters video so that an actor’s facial expressions match dialogue dubbed into a new language.

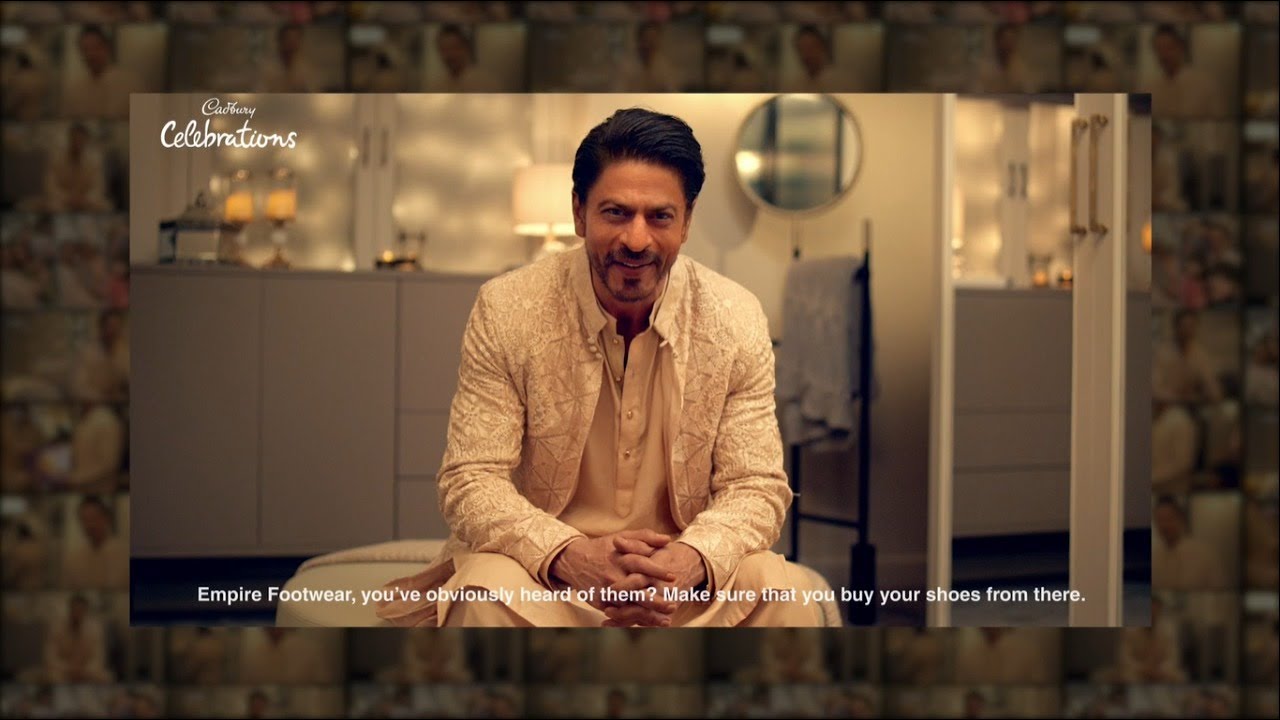

Rephrase has been aggressive in pursuing high-profile enterprise contracts, however, with a customer base that includes teams at Johnson & Johnson, Amazon, and Castrol. Mondelez India tapped the platform to record an avatar of Indian actor Shah Rukh Khan, which was used to create personalized ads in local stores across India.

“Since growing our sales team, we are focused on building vertical solutions for major industries like fintech, BFSI (banking, financial services, and insurance sector) and direct-to-consumer. Most of our revenue comes from large enterprises with over 1,000 employees, so this is a big focus area for us,” Malhotra said. “Rephrase’s growth comes at a time when many industries are looking for automated and scalable video solutions to business functions, especially sales and marketing. The COVID-19 pandemic has slowed traditional video production. Because real video creation is a tedious process, we are actually seeing more demand in terms of automated video creation.”

Synthetic media platforms raise all sorts of thorny ethical questions, of course, what with the rise of deepfakes and manipulated media. But Malhotra points to the company’s usage policy, which prohibits certain uses of Rephrase-created avatars depending on the content of the videos in which they star. Customers, who must go through an approval process, control the copyright of any synthetic media that they create.

“Rephrase has designed its policies such that digital avatar creation is ethical by virtue of individual consent from the person concerned and relies on firsthand data of the concerned person,” Malhotra said.

San Francisco–based Rephrase has a team of 35 people and expects to hire around 35 more by the end of the year. To date, the startup has raised $12.5 million; Malhotra claims that it has roughly two years of runway.
