Consumers and businesses are forever demanding faster and easier ways to get things done, and today a startup that is building tech to make that a reality using AI and the camera on your mobile device is announcing a big round of growth funding. Scandit — which uses computer vision to scan barcodes, text, ID cards or any physical object to trigger automated responses, provide analytics and more — has raised $150 million, a Series D that values the Swiss startup at over $1 billion.

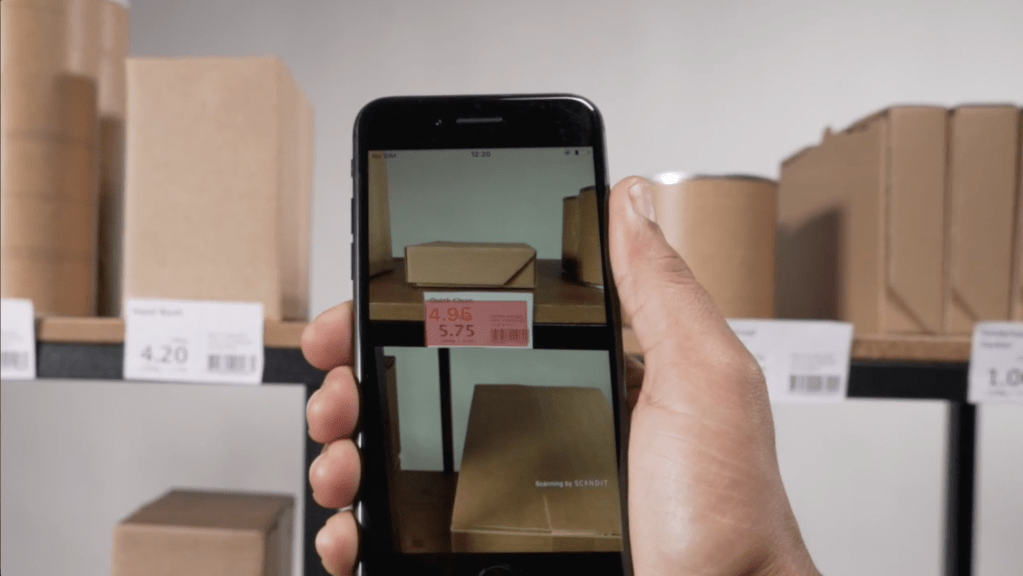

Scandit has made a name for itself with technology that can work on smartphones — meaning customers do not need to invest in more clunky and narrowly functional customized devices to tap into computer vision magic — but it also has been working on other applications of its technology, including in autonomous data capture, an area where it will also be putting some of this investment.

“We focus on enabling smart data solutions, which means any direct end user device whether it’s a smartphone or tablet, or a drone, anything that can use computer vision,” said CEO and co-founder Samuel Mueller in an interview. The company also plans to use the funding to keep hiring and to expand internationally.

Warburg Pincus led the round, with previous backers Atomico, Forestay Capital, G2VP, GV, Kreos, NGP Capital, Schneider Electric, Sony Innovation Fund and Swisscom Ventures also participating. The company has now raised $300 million in total.

Since its last raise in 2020, an $80 million Series C, Scandit has been on a roll. Annual recurring revenues have doubled (it doesn’t disclose actual figures). And it now has some 1,700 customers using its tech in a range of B2B and B2C services in verticals like retail, transportation and travel, manufacturing and logistics, and healthcare, along with any use case where capturing an image of a person or an object will spur another action. The list includes huge enterprises like the NHS, FedEx and L’Oréal, but also smaller apps, all of which are getting up to speed with how the working world now operates.

“There has been a sea change among enterprise customers looking at solutions like Scandit’s,” Mueller said, noting that eight of the 10 biggest retailers in the U.S. are currently customers. “They’ve all moved away from traditional scanning equipment to embrace either smartphone-based data capture solutions, or BYO devices, because of lower costs and much more flexibility.” In retail, for example, one big driver, he said, has been the need for better real-time inventory data.

One other factor that may well have influenced this funding round and valuation is that Scandit’s core mechanics go beyond simple barcode reading. The startup is a spinout of the highly regarded computer vision department of ETH Zurich, and it currently has some 23 patents for its technology, eight granted and the rest working their way through the patent application process.

The opportunity that Scandit has identified and is addressing is one that spans all of our daily lives, whether we think about it consciously or not. As a population, many of us have grown used to things working automatically, a state of affairs made to feel ever more “natural”, more commonplace, thanks to technology that makes it so. That, in turn, is driving a faster pace of innovation to speed things up even more. Smartphones have had a massive role to play in this area, with sensors and fast data processing that authenticate us, help us look for and buy things and, of course, communicate with the world in all kinds of ways (text, audio, video) and through any number of channels, wherever we happen to be.

The cameras on these devices have been a critical component (pun intended) of the evolution. “In the blink of an eye” has become “in the click of a cameraphone.” That has opened the door for companies like Scandit to come in and make all that possible.

It could be argued that the very basics of computer vision as played out on a smartphone are commoditized these days, since even the most basic smartphones can capture QR codes and other objects with cameras in order to trigger other actions, or for image filtering and so on. Some of what Scandit is doing, though, is supercharging all of those processes. “The computer vision and machine learning we are doing is all on the edge” — that is, on the devices themselves — “so we have learned to deal with limited camera axes, low light and too-bright light, motion,” said Mueller. “Motion blur is one of the hardest. You have to be able to correct for that.”

That raises the question, too, of how much Scandit has been talking to hardware and platform companies (for example, Apple, Google or Microsoft, all very keen to drive deeper into enterprise use cases for their technology). Mueller declined to comment on that except to note that Scandit is one of Apple’s “approved mobility partners” and that it has go-to-market initiatives with Apple and Samsung.

For now, Scandit’s version of “smart” in smart data capture seems to be what is interesting investors, despite the fact that there are probably dozens if not more companies in the market offering their own take on image-based data capture. (They include MishiPay, Dynamsoft, Cognex, Blippar and others.)

“Scandit’s smart data capture technology is transforming the way businesses operate and interact with their customers in an increasingly digital world and is strongly aligned with some of the biggest secular trends of our time, including enablement of the digital workforce and supply chain visibility,” said Flavio Porciani, MD at Warburg Pincus, in a statement. “Already used by leading enterprises across multiple industries, by customers and end users all over the world, we see a huge opportunity for Scandit to cement its position as the global leader in smart data capture. We are excited to have the opportunity to partner with the team at Scandit on the next phase of their ambitious growth strategy.”
