Headroom, a startup developing AI-powered software to make meetings ostensibly more efficient, today announced that it raised $9 million in funding led by Equal Opportunity Ventures with participation from Gradient Ventures, LDV Capital, AME Cloud Ventures, and Morado Ventures. CEO Julian Green said that the proceeds will be put toward product development and expanding the company’s workforce.

During the pandemic, virtual meetings became the de facto method of collaborating and connecting — both inside and outside of the workplace. The momentum isn’t slowing down. A 2020 IDC report projected that the videoconferencing market would grow to $9.7 billion in 2021, with 90% of North American businesses likely to spend more on it. But in an interview with TechCrunch, Green argued that videoconferencing as it exists for most companies today simply can’t replace the intimacy of small, focused meeting groups. He pointed to a Harvard Business Review survey, which revealed that 65% of senior managers felt meetings kept them from completing their own work while 64% said that they came at the expense of “deep thinking.”

“The legacy videoconferencing players are struggling to innovate across the disruptive shift from client-based messaging architectures to low-latency, hardware-accelerated, cloud-based real-time AI on real-time communication streams,” Green said via email. “There has been a slow rollout of AI features (e.g., captions, noise cancellation), even though all acknowledge that AI features are demanded by users and the future of virtual meetings.”

Alongside Andrew Rabinovich, Green launched Headroom (not to be confused with Max Headroom) in 2020 to address what he perceives as the outstanding blockers in the videoconferencing industry. Green was previously the director at Google’s experimental X division and co-founded Houzz, an online interior design platform. Rabinovich was the head of AI at Magic Leap, the well-funded augmented reality startup, and prior to that he was a Google software engineer focused on computer vision.

“[We] wanted to enable remote work by making meetings smart and making meeting information useful,” Green said. “Headroom competes with the fragmented videoconferencing and meeting tools that people patch together to join, take notes in and send recaps of meetings … Having a shareable institutional memory of meetings reduces meeting duplication, and repetition, which is a major drag on enterprise productivity and employee happiness.”

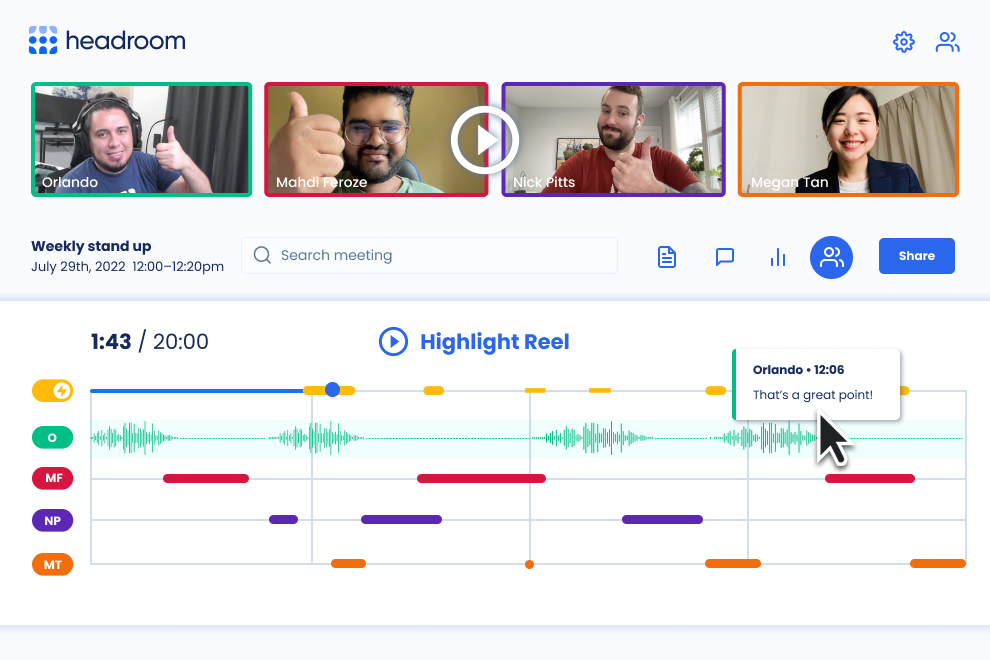

Headroom uses AI to power features like automatic transcripts and meeting summaries, which remain indexable after meetings with search filters for attendees, notes and topics. The platform offers full meeting replays and auto-generated highlight reels with key moments and action items, plus AI-powered upscaling and quick reactions like “thumbs up” and “wave” that participants can use during meetings.

But one of Headroom’s more distinctive features is its extensive analytics capabilities. The app tries to quantify “real-time meeting energy” by analyzing video, audio and text from attendees. It even tracks eye movements and hand and head poses, attempting to figure out the sentiment in a person’s exchanges.

Sound a little dystopian? Perhaps. Setting that aside for a moment, there’s the matter of bias, which makes sentiment analysis an imperfect science at best. For example, research has shown that some of the datasets used to develop sentiment analysis algorithms associate words like “Black” with negative sentiments. The result is systems that are more likely to flag a Black person’s speech with problematic descriptors (e.g., “sad”) than a white person’s.

Advocacy groups generally aren’t bullish on sentiment tracking. When Zoom introduced it as a feature for sales training, 28 human rights organizations wrote an open letter to the company asking it to halt the software, calling it “discriminatory, manipulative [and] potentially dangerous” — and pointing out that it’s based on the flawed assumption that markers like voice patterns and body language are uniform for all people.

On the privacy front, Green said that only people who’ve been given access to the analytics data, like fellow meeting invitees or those with the appropriate permissions, can access it through Headroom. (Data is stored in the cloud; Headroom says it’s pursuing, but doesn’t yet have, SOC 2 Type II certification.) Meeting organizers can choose to restrict meeting information further among those they invite, and — perhaps most importantly — any user can request to have their data deleted “in all forms.”

Green said that combating bias is an ongoing area of study for Headroom as well, albeit a broad one. While revealing little about Headroom’s sentiment analysis technologies, he highlighted the platform’s efforts to use feedback from meeting participants to improve its various algorithms, including for meeting summaries.

“[Headroom quantifies] participant engagement with real-time wordshare, affective computing and eye tracking to give all participants a chance to engage to ensure more equal and diverse meetings,” Green said. “Headroom’s approach to real-time meeting understanding is based on multimodal AI … Our model leverages inductive bias to disambiguate nuances and capture key moments in conversations.”

Headroom’s policies might not allay every would-be user’s fears. But one might argue that the larger threat to the company, at least right now, comes from household-name rivals like Microsoft Teams, Google Meet and Zoom. Nvidia threw its hat into the ring two years ago with Maxine, which makes heavy use of AI to deliver features like noise cancellation and face relighting. On the other end of the spectrum, startups like Fireflies.ai and Read.ai are taking the plug-in approach, integrating with existing videoconferencing platforms to drive meeting transcriptions and other “intelligent” features.

With a focus on growth over profit, San Francisco–based Headroom, which has 14 employees, has remained free and without usage or storage caps since launch. Green says that it currently has around 5,000 users — far short of Zoom’s hundreds of millions. But he stresses that (1) Headroom isn’t necessarily trying to compete with platforms like Zoom, preferring to focus on the small- and medium-sized business niche, and (2) it’s early days for the platform.

“The global pandemic, remote work, and now a hybrid workforce showed us everything that is wrong with meetings. No matter your company’s return to work policy, remote teams need better meetings and the ability to search, review and share meeting information,” Green said. “The Headroom team experimented throughout [the pandemic] on how to make meetings better for remote teams, and is flexible to virtual, hybrid, in person, synchronous and asynchronous. A hybrid workforce is the new norm, so Headroom will continue to deliver a platform that evolves with where and how people are working.”
