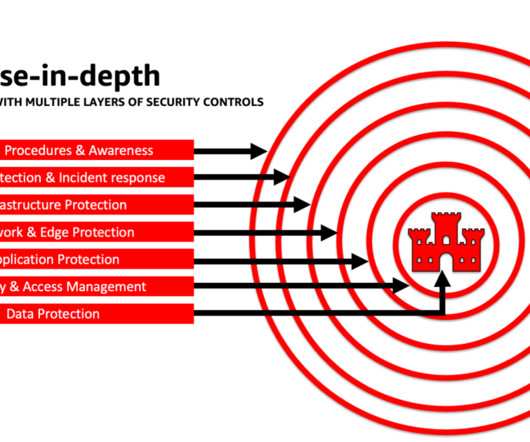

PREVENT Use Cases: Identifying High Impact Attack Paths

Darktrace

FEBRUARY 22, 2023

This blog explains the benefits of thinking like an attacker and modelling attack paths in order to understand where you need to invest your defenses.
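To make "thinking like an attacker" concrete, the sketch below models a small, entirely hypothetical network as a directed graph. It enumerates every path from assumed entry points to a critical asset and counts how often each intermediate node appears. Nodes that sit on many paths are natural candidates for defensive investment. This is a generic Python illustration, not how Darktrace PREVENT computes attack paths. The node names, the edges and the "count the paths" scoring are assumptions chosen for clarity.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical attack graph: an edge A -> B means an attacker who controls A
# could plausibly move to B. Node names are illustrative, not real assets.
AttackGraph = Dict[str, List[str]]

GRAPH: AttackGraph = {
    "phishing_email": ["workstation"],
    "vpn_portal": ["workstation", "jump_host"],
    "workstation": ["file_server", "jump_host"],
    "jump_host": ["domain_controller"],
    "file_server": ["domain_controller"],
}


def enumerate_attack_paths(graph: AttackGraph, entry: str, target: str) -> List[List[str]]:
    """Return every simple (cycle-free) path from entry to target, via depth-first search."""
    paths: List[List[str]] = []
    stack = [(entry, [entry])]
    while stack:
        node, path = stack.pop()
        if node == target:
            paths.append(path)
            continue
        for nxt in graph.get(node, []):
            if nxt not in path:  # never revisit a node already on the current path
                stack.append((nxt, path + [nxt]))
    return paths


def choke_points(paths: List[List[str]]) -> Counter:
    """Count how many paths pass through each intermediate node."""
    counts: Counter = Counter()
    for path in paths:
        counts.update(path[1:-1])  # ignore the entry point and the target
    return counts


if __name__ == "__main__":
    all_paths: List[List[str]] = []
    for entry in ("phishing_email", "vpn_portal"):
        all_paths.extend(enumerate_attack_paths(GRAPH, entry, "domain_controller"))
    print(len(all_paths))                          # 5
    print(choke_points(all_paths).most_common(1))  # [('workstation', 4)]
```

In this toy graph, the workstation sits on four of the five paths. Hardening it would therefore cut off the most routes. Exhaustive enumeration only scales to small graphs, and real tooling would likely weight edges by likelihood and impact instead of counting them equally.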

Related Topics

Palo Alto Networks

APRIL 19, 2024

We performed forensic investigations to identify the root cause of the vulnerability, understand the tactics behind the exploit payload, and determine options for enabling protections in our product. The flaw enabled the attacker to store an empty file with a filename of the attacker's choosing.

Tenable

MARCH 8, 2024

More than 40% of ransomware attacks in 2023 impacted critical infrastructure, according to the FBI. Plus, a survey shows how artificial intelligence is affecting cybersecurity jobs, and MITRE updated a database about insider threats.

Lacework

OCTOBER 27, 2023

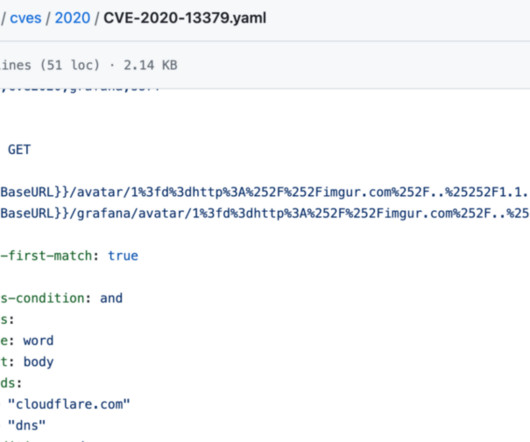

Over the past several weeks, Lacework has observed a significant uptick in AWS instance credential compromises that were traced to Grafana server-side request forgery (SSRF) attacks. This blog describes this tactic, indicators to look out for in your environment, and how Lacework composite alerts will help you identify potential compromises.
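Some context on the tactic. In a server-side request forgery (SSRF) attack, the attacker gets the server to issue an HTTP request to a URL of their choosing. On EC2, the instance metadata service at the link-local address 169.254.169.254 can return temporary credentials for the instance's IAM role. An SSRF that reaches it from a Grafana host can therefore leak those credentials. The Python sketch below shows one generic mitigation: a destination check that refuses outbound URLs resolving to link-local, loopback or private addresses. It is not Lacework's detection logic or a Grafana configuration option, and the function names are hypothetical.

```python
import ipaddress
import socket
from typing import List, Union
from urllib.parse import urlparse

IPAddress = Union[ipaddress.IPv4Address, ipaddress.IPv6Address]

# On EC2, the instance metadata service (IMDS) listens on this link-local
# address and can hand out the instance role's temporary credentials.
AWS_IMDS_IPV4 = ipaddress.ip_address("169.254.169.254")


def is_blocked_ip(ip: IPAddress) -> bool:
    """True for addresses a server-side fetch should never reach."""
    return (
        ip == AWS_IMDS_IPV4
        or ip.is_link_local   # 169.254.0.0/16, fe80::/10
        or ip.is_loopback     # 127.0.0.0/8, ::1
        or ip.is_private      # RFC 1918 ranges, fc00::/7 and similar
        or ip.is_unspecified  # 0.0.0.0, ::
    )


def resolve_host(host: str) -> List[IPAddress]:
    """Return the IP addresses for an IP literal or a hostname."""
    try:
        return [ipaddress.ip_address(host)]
    except ValueError:
        infos = socket.getaddrinfo(host, None)
        # Strip any IPv6 scope suffix such as "%eth0" before parsing.
        return [ipaddress.ip_address(info[4][0].split("%")[0]) for info in infos]


def is_safe_outbound_url(url: str) -> bool:
    """Reject non-HTTP(S) URLs and any host that resolves to a blocked address."""
    try:
        parsed = urlparse(url)
        host = parsed.hostname
    except ValueError:
        return False  # malformed URL, e.g. an unbalanced IPv6 bracket
    if parsed.scheme not in ("http", "https") or not host:
        return False
    try:
        addresses = resolve_host(host)
    except (OSError, UnicodeError, ValueError):
        return False  # fail closed if the host cannot be resolved
    return bool(addresses) and not any(is_blocked_ip(ip) for ip in addresses)


if __name__ == "__main__":
    for candidate in ("http://169.254.169.254/latest/meta-data/", "https://93.184.216.34/"):
        print(candidate, is_safe_outbound_url(candidate))
```

This check still has gaps. Because the host is resolved once and fetched later, DNS rebinding and open redirects remain possible, so a production guard would need to connect to the exact address it checked. On the AWS side, requiring IMDSv2 session tokens also makes this kind of credential theft harder.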

Prisma Cloud

AUGUST 30, 2023

In today’s post, we look at action pinning, one of the key mitigations against supply chain attacks in the GitHub Actions ecosystem. It turns out, though, that action pinning comes with a downside: a pitfall we call "unpinnable actions" that allows attackers to execute code in GitHub Actions workflows.
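Action pinning means referencing a third-party action by a full-length commit SHA rather than a mutable tag or branch such as `@v4` or `@main`. This ensures that the code that runs cannot be silently swapped. The Python sketch below flags `uses:` references in a workflow file that are not pinned this way. It is a hypothetical line-based check (a real tool would parse the YAML). The sample workflow, including `example-org/scan-action` and the placeholder SHA, is made up. The check only inspects the reference written in the workflow, so it says nothing about the "unpinnable actions" pitfall the post describes.

```python
import re
from typing import List, Tuple

# Matches a `uses:` reference such as `uses: actions/checkout@v4`, optionally
# written as a list item (`- uses: ...`) and optionally quoted.
USES_RE = re.compile(r"""^\s*(?:-\s*)?uses:\s*["']?([^"'\s#]+)""")
# A full-length commit SHA: 40 lowercase hexadecimal characters.
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")

# Made-up workflow; the SHA below is a placeholder, not a real commit.
SAMPLE_WORKFLOW = """\
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@0123456789abcdef0123456789abcdef01234567
      - uses: ./.github/actions/local-lint
      - name: Scan
        uses: "example-org/scan-action@main"
"""


def unpinned_references(workflow_text: str) -> List[Tuple[int, str]]:
    """Return (line_number, reference) pairs for actions not pinned to a full SHA."""
    findings: List[Tuple[int, str]] = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        match = USES_RE.match(line)
        if not match:
            continue
        ref = match.group(1)
        if ref.startswith("./"):
            continue  # local actions live in the same repository
        if ref.startswith("docker://"):
            # Docker images are pinned by digest rather than by commit SHA.
            if "@sha256:" not in ref:
                findings.append((lineno, ref))
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.match(version):
            findings.append((lineno, ref))
    return findings


if __name__ == "__main__":
    for lineno, ref in unpinned_references(SAMPLE_WORKFLOW):
        print(f"line {lineno}: {ref} is not pinned to a full commit SHA")
```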

CircleCI

JANUARY 13, 2023

We encourage customers who have yet to take action to do so now to prevent unauthorized access to third-party systems and stores. How do I know if I was impacted? How do we know this attack vector is closed and it’s safe to build?

AWS Machine Learning - AI

JANUARY 26, 2024

Specifically, this post seeks to help AI/ML practitioners and data scientists who may not have had previous exposure to security principles understand core security and privacy best practices for developing generative AI applications with LLMs. We then discuss how building on a secure foundation is essential for generative AI.
