Inferencing holds the clues to AI puzzles

CIO

APRIL 10, 2024

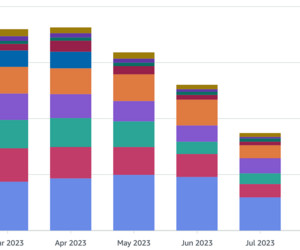

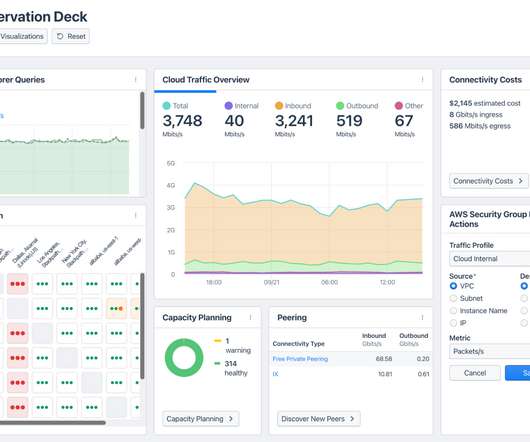

Running LLMs in a public cloud can incur high compute, storage, and data transfer fees, so the corporate datacenter has emerged as a sound option for controlling costs. Because LLMs consume more computational resources as their parameter counts grow, deciding where to place GenAI workloads is paramount.
